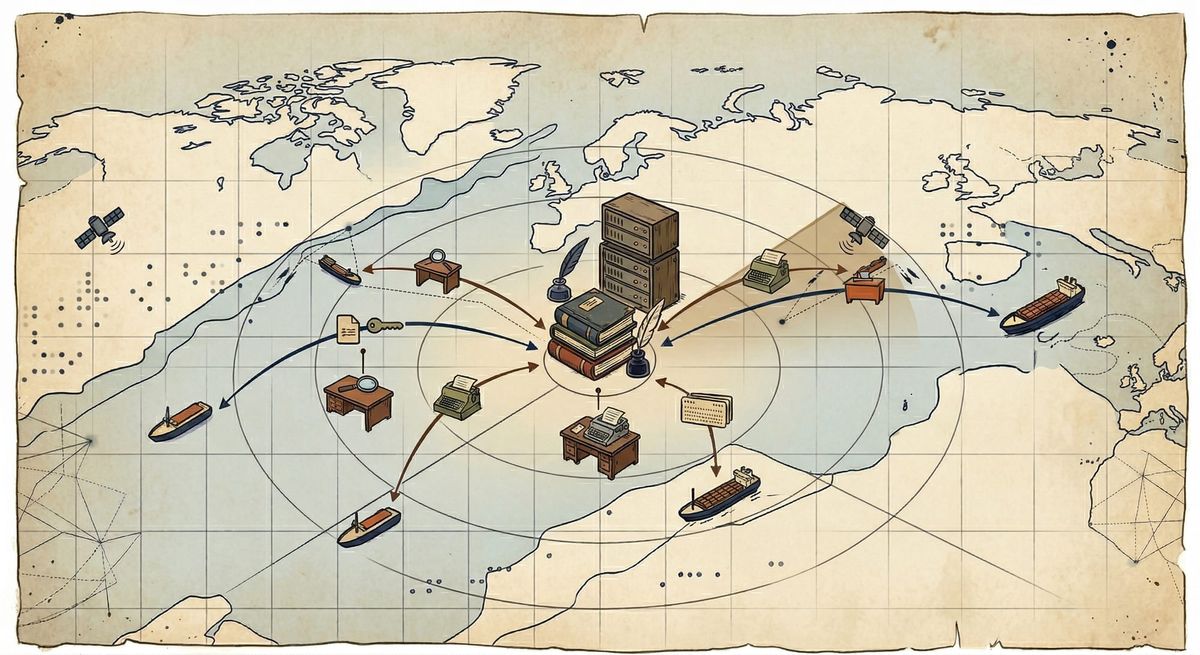

The maritime operational environment imposes severe constraints on distributed systems: high-latency satellite links, prolonged network partitions, rigid inbound firewall topologies, and asynchronous hardware lifecycles. Traditional cloud-native synchronization paradigms, which presume high availability, stable network connectivity, and synchronous execution, are incompatible with this domain. This paper presents an architectural framework for bidirectional relational metadata synchronization between a canonical cloud database and autonomous maritime edge nodes. By combining the Transactional Outbox pattern, metadata-driven Change Data Capture (CDC), application-level topological sorting, schema-aware data quarantining, and Post-Quantum Cryptography (PQC), the architecture achieves deterministic eventual consistency. The paper details the implementation of a generic, memory-safe synchronization daemon that maintains referential integrity and zero-trust security without compromising the operational autonomy of the disconnected edge node.

Source code for the sample project:

Codeberg.orgnauticalist

Introduction

Modern maritime operations depend on the continuous availability of digital metadata at the edge. Applications ranging from algorithmic voyage optimization to human resources and seafarer training management require localized compute resources that remain operational during prolonged satellite network blackouts.

When a vessel experiences a network partition, both the localized edge database and the shore-based cloud database operate as isolated, authoritative nodes. Concurrent modifications to overlapping datasets across these nodes produce split-brain scenarios. Under the constraints of the CAP theorem, the environment enforces a partition (P); thus, the system must prioritize Availability (A) over global Consistency (C). We must architect maritime data synchronization as an asynchronous, partition-tolerant (AP) distributed system. This study outlines a resilient framework for active-active bidirectional synchronization, prioritizing data integrity, deterministic conflict resolution, and autonomous edge operability.

Architectural Constraints and System Posture

The design of a maritime synchronization engine requires abandoning standard data-center assumptions in favor of deterministic, application-level state management. Four invariant infrastructural constraints govern the architecture:

- Topological Unreachability: Edge nodes reside behind strict maritime Network Address Translation (NAT) and perimeter firewalls. To protect Operational Technology (OT) networks, administrators prohibit inbound connections. All network exchanges must be edge-initiated.

- Compute Footprint: Edge servers possess limited resource allocation, preventing the execution of resource-intensive JVM-based message brokers (e.g., Apache Kafka) or heavy observability sidecars.

- Immutable Edge Deployments: Edge hardware cannot be serviced or updated reliably. Cloud-side software deployments therefore produce unavoidable, prolonged schema drift between the canonical cloud database and legacy edge nodes.

- Cryptographic Longevity: Considering the lifespan of maritime metadata, we must secure transmitted data against “Harvest Now, Decrypt Later” (HNDL) cryptanalytic attacks using post-quantum primitives.

Data Capture and Causal Ordering

To minimize payload size over asymmetric satellite uplinks and prevent false data collisions, the system avoids transferring complete database rows, relying instead on intent-based synchronization via column-level deltas.

Generic Change Data Capture (CDC) and the Transactional Outbox

Traditional CDC mechanisms often require auxiliary infrastructure (e.g., Debezium) that introduces unacceptable operational overhead for edge deployments. Instead, this architecture implements CDC directly within the PostgreSQL relational engine. Developers define standard tracking columns (id via UUIDv7, sync_version, and an is_deleted tombstone) and attach a generic PL/pgSQL trigger to the target tables.

Upon any data modification, the trigger computes the delta between the old and new row states and serializes only the modified columns into a generic JSONB payload, which it inserts into a local outbox table. Because the business data modification and the outbox insertion occur within the same database transaction, either both commit or neither does. Event generation is therefore atomic and sequence-ordered, which eliminates the dual-write anomaly.
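The delta computation can be illustrated outside the database. The following is a minimal sketch in Rust rather than the PL/pgSQL trigger itself. It assumes a row can be modeled as a map from column name to a textual value, and the names `Row`, `row`, and `column_delta` are hypothetical. It does not model NULLs or column removal.

```rust
use std::collections::BTreeMap;

/// A row modeled as column name -> textual value (a simplification for illustration).
type Row = BTreeMap<String, String>;

/// Hypothetical helper that builds a row from string pairs.
fn row(pairs: &[(&str, &str)]) -> Row {
    pairs
        .iter()
        .map(|(k, v)| (k.to_string(), v.to_string()))
        .collect()
}

/// Returns only the columns whose value in `new` differs from `old`
/// (including columns absent from `old`), i.e. the payload that would
/// be written to the outbox instead of the full row.
fn column_delta(old: &Row, new: &Row) -> Row {
    new.iter()
        .filter(|(col, val)| old.get(*col) != Some(*val))
        .map(|(col, val)| (col.clone(), val.clone()))
        .collect()
}
```

For example, if only `contact_number` changes between the old and new row, `column_delta` returns a single-entry map and the unchanged `rank` column never crosses the satellite link.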

Conflict Resolution via Logical Clocks and Intent

In an active-active topology, relying on physical hardware timestamps for conflict resolution introduces a critical vulnerability because of unbounded clock drift on disconnected vessels. To order concurrent updates deterministically without wall-clock time, the system implements a logical clock via the sync_version integer, a per-record version counter.

Application-level configuration registries enforce strict field-level ownership and thereby resolve concurrent modifications deterministically. If a shore-based administrator and a seafarer modify the same entity offline, the fields are structurally segregated (e.g., HR controls rank, the seafarer controls contact_number), preventing false collisions. The system strictly prohibits hard deletions, opting instead for tombstone markers (is_deleted = true). This approach prevents the “deletion inversion” anomaly, where one node tries to merge an update into a record that another node has already purged.
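Field-level ownership can be sketched as a filter applied to each incoming delta. The registry contents, the `Origin` enum, and the function names below are assumptions made for illustration. Dropping unregistered columns is also a choice of this sketch, not something the architecture specifies.

```rust
use std::collections::BTreeMap;

#[derive(Clone, Copy, Debug, PartialEq, Eq)]
enum Origin {
    Shore,
    Vessel,
}

/// Hypothetical ownership registry: column name -> the only side allowed to write it.
fn ownership_registry() -> BTreeMap<&'static str, Origin> {
    BTreeMap::from([
        ("rank", Origin::Shore),
        ("contact_number", Origin::Vessel),
    ])
}

/// Keeps only the columns of `delta` that `origin` owns. Columns owned by the
/// other side, or missing from the registry, are dropped in this sketch, so an
/// offline write cannot overwrite a field controlled by the other side.
fn filter_owned(
    delta: &BTreeMap<String, String>,
    origin: Origin,
    registry: &BTreeMap<&'static str, Origin>,
) -> BTreeMap<String, String> {
    delta
        .iter()
        .filter(|(col, _)| registry.get(col.as_str()) == Some(&origin))
        .map(|(col, val)| (col.clone(), val.clone()))
        .collect()
}
```

Because each column has exactly one writer, two deltas for the same record never compete for the same field, and the merge result does not depend on arrival order.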

Topological Sorting and Referential Integrity

A generic synchronization engine processes heterogeneous batches of mutations across multiple relational tables. Blindly applying these updates chronologically risks transient foreign key constraint violations (e.g., attempting to insert a child training certificate before its parent user record arrives).

While relational engines offer deferred constraint evaluation (e.g., DEFERRABLE INITIALLY DEFERRED), this obscures the precise origin of execution failures and degrades diagnostic observability. Instead, the architecture uses an application-level priority registry. Before initiating the database transaction, the edge daemon sorts the incoming batch in memory on two keys: first by hierarchical dependency (parent tables before child tables), then by chronological sequence. This deterministic ordering ensures that parent records are applied before the children that reference them, so referential integrity is established before the daemon acquires any database lock.
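A minimal sketch of the two-key ordering follows. It uses a static priority lookup rather than a general graph-based topological sort. The table names, the priority values, and the `Mutation` type are hypothetical.

```rust
#[derive(Debug, Clone, PartialEq)]
struct Mutation {
    table: String,
    sequence: u64,
}

/// Hypothetical priority registry: a lower number is applied earlier (parents first).
fn table_priority(table: &str) -> u32 {
    match table {
        "users" => 0,
        "training_certificates" => 1,
        _ => u32::MAX, // unregistered tables are applied last in this sketch
    }
}

/// Orders the batch by table priority, then by sequence within each table.
/// `sort_by_key` is a stable sort, so the result is deterministic.
fn sort_batch(batch: &mut [Mutation]) {
    batch.sort_by_key(|m| (table_priority(&m.table), m.sequence));
}
```

A batch that arrives as a certificate followed by two user events is reordered so that both user events (by ascending sequence) precede the certificate that references them.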

Transport Mechanics and Content-Based Routing

Because of strict maritime ingress firewalls, the cloud infrastructure cannot natively push events to the edge. The system abandons continuous streaming (e.g., gRPC, WebSockets) in favor of a piggybacked synchronization protocol started only by the edge node.

Piggybacked HTTP/3 Exchanges

The edge node transmits an outbound payload containing its local outbox events alongside its current High-Water Mark (Cursor), which represents the latest cloud sequence identifier it successfully processed. This exchange occurs over HTTP/3. Because HTTP/3 runs over QUIC (UDP) and multiplexes independent streams, a lost packet delays only the stream it belongs to. This avoids the transport-level head-of-line blocking of TCP on lossy, high-jitter satellite links.

The cloud node processes the edge telemetry idempotently, queries its own vessel-partitioned outbox using the provided cursor, and returns the pending cloud-to-edge mutations within the same HTTP response.
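The request/response cycle can be sketched with in-memory types. This is an illustration under stated assumptions, not the project's wire format: the struct names, the `seen_edge` idempotency ledger, and the single-vessel outbox are hypothetical, and transport, encryption, and persistence are omitted.

```rust
use std::collections::BTreeSet;

#[derive(Debug, Clone, PartialEq)]
struct Event {
    sequence: u64,
    payload: String,
}

struct SyncRequest {
    edge_events: Vec<Event>,
    cursor: u64, // last cloud sequence the edge has applied
}

struct SyncResponse {
    cloud_events: Vec<Event>,
}

#[derive(Default)]
struct CloudNode {
    outbox: Vec<Event>,       // cloud-to-edge events for one vessel
    seen_edge: BTreeSet<u64>, // edge sequences already applied
    applied: Vec<Event>,      // edge events accepted by the cloud
}

impl CloudNode {
    fn handle_sync(&mut self, req: SyncRequest) -> SyncResponse {
        let SyncRequest { edge_events, cursor } = req;
        for ev in edge_events {
            // `insert` returns false for a replayed sequence, so retries are no-ops.
            if self.seen_edge.insert(ev.sequence) {
                self.applied.push(ev);
            }
        }
        // Piggyback everything newer than the edge's cursor onto the response.
        let cloud_events = self
            .outbox
            .iter()
            .filter(|ev| ev.sequence > cursor)
            .cloned()
            .collect();
        SyncResponse { cloud_events }
    }
}
```

If the response is lost on the satellite link, the edge resends the same request with the same cursor. The cloud skips the already-seen edge events and returns the same pending mutations, so the retry is safe.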

Split-and-Translate Fleet Routing

The centralized cloud operates as a content-based router. Rather than broadcasting global state, it isolates outbound events per vessel identifier. A complex distributed systems challenge arises during domain entity transfers (e.g., reassigning a crew member across vessels). The cloud router resolves this via a transactional saga that generates two complementary events: a soft-delete offboarding command routed to the origin vessel’s queue, and a complete structural baseline snapshot routed to the destination vessel’s queue.
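The split into two events can be sketched as follows. The `RoutedEvent` variants, the `Queues` alias, and `route_transfer` are hypothetical names. In a real deployment both enqueues would share one database transaction, and this in-memory sketch does not model that atomicity.

```rust
use std::collections::BTreeMap;

#[derive(Debug, Clone, PartialEq)]
enum RoutedEvent {
    SoftDelete { entity_id: String },
    Snapshot { entity_id: String, columns: BTreeMap<String, String> },
}

/// Per-vessel outbound queues, keyed by vessel identifier.
type Queues = BTreeMap<String, Vec<RoutedEvent>>;

/// Translates one transfer into an offboarding tombstone for the origin
/// vessel and a full baseline snapshot for the destination vessel.
fn route_transfer(
    queues: &mut Queues,
    entity_id: &str,
    full_row: &BTreeMap<String, String>,
    from_vessel: &str,
    to_vessel: &str,
) {
    queues
        .entry(from_vessel.to_string())
        .or_default()
        .push(RoutedEvent::SoftDelete { entity_id: entity_id.to_string() });
    queues
        .entry(to_vessel.to_string())
        .or_default()
        .push(RoutedEvent::Snapshot {
            entity_id: entity_id.to_string(),
            columns: full_row.clone(),
        });
}
```

The destination receives a snapshot rather than a delta because it has no prior state for the entity to apply a delta against.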

Schema Evolution and Edge Autonomy

In a distributed fleet, achieving instantaneous global software parity is impossible. Cloud endpoints will invariably migrate to subsequent schema versions while remote vessels continue operating legacy software configurations.

Schema-Aware Quarantining

If an edge node receives a generic data payload containing newly introduced columns that do not yet exist in its local physical database, failing the transaction results in permanent data loss. Conversely, executing dynamic Data Definition Language (DDL) statements (e.g., ALTER TABLE) over an intermittent satellite link introduces catastrophic risks of database corruption.

To maintain an immutable edge architecture, the edge daemon uses boot-time schema discovery. Upon initialization, the daemon maps its physical PostgreSQL schema (tables, columns, and constraints) into memory. Incoming generic payloads are split at the column level: known columns apply to the active relational tables, while unknown attributes (future data) are extracted and stored in a local schema_quarantine table using PostgreSQL JSONB concatenation (||). After a hardware or container update adds the missing schema columns, the daemon drains the quarantine table, deterministically applying the held data.
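The column-level split can be sketched in memory. The sketch assumes the discovered schema is available as a set of column names. `Payload`, `split_payload`, `merge_quarantine`, and the example column `visa_status` are hypothetical. `merge_quarantine` mimics the overwrite semantics of JSONB `||` on a plain map.

```rust
use std::collections::{BTreeMap, BTreeSet};

type Payload = BTreeMap<String, String>;

/// Splits an incoming payload into (applicable, quarantined) parts, given the
/// column names discovered in the local schema at boot.
fn split_payload(payload: &Payload, known_columns: &BTreeSet<String>) -> (Payload, Payload) {
    payload
        .iter()
        .map(|(col, val)| (col.clone(), val.clone()))
        .partition(|(col, _)| known_columns.contains(col))
}

/// Analogue of JSONB `||`: keys in `incoming` overwrite keys already quarantined,
/// so the vault always holds the latest value seen for each unknown column.
fn merge_quarantine(existing: &mut Payload, incoming: Payload) {
    existing.extend(incoming);
}
```

When a later software update adds the missing column, the daemon can replay the quarantined map through the same `split_payload` step, since those columns now count as known.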

Air-Gapped Initialization (Sneakernet)

Initial vessel provisioning often exceeds what a satellite link can practically transfer, so the architecture supports physical media bootstrapping. The cloud infrastructure generates an encrypted database snapshot that embeds the exact sequence cursor at the moment the snapshot was taken. Once the vessel's crew ingests the media locally, the synchronization daemon boots, reads the embedded cursor, and immediately resumes delta synchronization over the satellite link, bridging the gap accumulated during physical transit.

Cryptographic Framework and Disconnected Identity

Securing distributed maritime infrastructure requires decoupling application security from infrastructure-level mutual TLS (mTLS), which is susceptible to middlebox inspection and to certificate expiration during prolonged offline periods.

Two-Tiered Post-Quantum PKI

To prevent an offline vessel from permanently losing authentication access due to expired short-lived certificates, the edge daemon implements an autonomous rotation state machine based on Post-Quantum Cryptography (PQC).

- Trust Anchor: The vessel holds a long-lived, securely stored ML-DSA (Module-Lattice-Based Digital Signature Algorithm) private key.

- Operational Tokens: The daemon uses the ML-DSA key solely to sign cryptographic challenges over the network, exchanging them for short-lived, stateless JSON Web Tokens (JWTs). These tokens authenticate the high-frequency delta syncs.

Application-level hybrid envelope encryption achieves payload confidentiality. A post-quantum Key Encapsulation Mechanism (ML-KEM) secures an ephemeral symmetric key (AES-256-GCM), which encrypts the compressed binary payload, ensuring zero-trust routing across application gateways.

Edge Operationalization and Observability

To minimize the transmission size of eventual Over-The-Air (OTA) updates and reduce the Common Vulnerabilities and Exposures (CVE) surface area of a standard operating system image, the edge synchronization daemon is implemented in Rust, cross-compiled to a statically linked binary, and deployed as a “distroless” (scratch) Linux container.

Because distroless containers lack shell access for remote troubleshooting, we must treat observability with transactional rigor. The system uses a Telemetry Outbox paired with a Dead Letter Queue (DLQ). If an inbound cloud event violates a local database constraint, the business transaction is rolled back deterministically, relying on Rust’s Resource Acquisition Is Initialization (RAII) model to release it when it goes out of scope. Subsequently, a distinct database connection records the structured failure context into a local telemetry table. Because this diagnostic payload piggybacks to the cloud during the subsequent network cycle, shore engineers can access edge diagnostics without requiring auxiliary monitoring agents.
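The rollback-then-record sequence can be sketched with a guard type whose `Drop` implementation performs the rollback. This is a toy in-memory model, not a PostgreSQL client: `Db`, `Tx`, `apply_event`, and the "empty row" constraint are hypothetical stand-ins, and the separate telemetry connection is reduced to a second vector.

```rust
/// Toy in-memory "database": committed rows, a telemetry table, a rollback counter.
#[derive(Default)]
struct Db {
    rows: Vec<String>,
    telemetry: Vec<String>,
    rollbacks: u32,
}

/// Transaction guard: staged writes reach `db.rows` only on `commit`.
struct Tx<'a> {
    db: &'a mut Db,
    staged: Vec<String>,
    committed: bool,
}

impl<'a> Tx<'a> {
    fn begin(db: &'a mut Db) -> Self {
        Tx { db, staged: Vec::new(), committed: false }
    }

    fn insert(&mut self, row: &str) -> Result<(), String> {
        if row.is_empty() {
            return Err("constraint violation: empty row".to_string());
        }
        self.staged.push(row.to_string());
        Ok(())
    }

    fn commit(mut self) {
        let staged = std::mem::take(&mut self.staged);
        self.db.rows.extend(staged);
        self.committed = true;
    }
}

impl Drop for Tx<'_> {
    /// RAII: a guard dropped without `commit` discards its staged writes.
    fn drop(&mut self) {
        if !self.committed {
            self.staged.clear();
            self.db.rollbacks += 1;
        }
    }
}

/// Applies one inbound event; on failure the guard rolls back when it leaves
/// scope, and only then is the failure context written to telemetry.
fn apply_event(db: &mut Db, rows: &[&str]) {
    let outcome = {
        let mut tx = Tx::begin(db);
        let result = rows.iter().try_for_each(|r| tx.insert(r));
        if result.is_ok() {
            tx.commit();
        }
        result
    };
    if let Err(reason) = outcome {
        db.telemetry.push(reason);
    }
}
```

Writing the telemetry record only after the guard has dropped keeps the diagnostic entry outside the failed transaction, so the rollback cannot erase it.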

Conclusion

Engineering distributed data systems for the maritime edge requires a fundamental departure from synchronous, infrastructure-dependent cloud paradigms. By treating the network as inherently hostile and relying on deterministic, application-level state management, organizations can achieve highly reliable data synchronization.

The architecture presented here synthesizes generic Change Data Capture, topological payload sorting, schema quarantines, and Post-Quantum cryptographic state machines within memory-safe execution constraints. It demonstrates that strict relational integrity and operational autonomy are not mutually exclusive. Abandoning fragile constant-connectivity assumptions in favor of asynchronous, deterministic synchronization protocols establishes a robust foundation for the digitalization of the modern maritime fleet.